Empusa

The development of ...

During the development of the GBOL ontology, it became increasingly difficult to keep track of the changes made to the ontology. We had previously developed RDF2Graph to reveal the structure of a semantic database, but it is only useful once a database has been created. To force parsers to adhere to the ontology, we needed a more advanced solution: an API generator driven by the ontology itself.

To manage the large variety of properties and classes in an easy-to-use format, we developed Empusa as part of the GBOL Stack. Empusa is a Java application that converts an OWL/ShEx-like ontology into an API for Java and R, plus an ontology website.

As an example, for the GBOL ontology alone, Empusa generates from a 4,000-line ontology a Java API of 50,000 lines, an R API of 12,000 lines, an OWL file of 12,044 lines, a ShExC file of 3,202 lines, and the website you are currently viewing.

The input file for Empusa combines OWL with a simplified version of ShEx and can be edited in, for example, Protégé.

The classes are defined in OWL, whereas the properties are defined in each class under the annotation property ‘propertyDefinitions’ encoded within a simplified format of the ShEx standard.

Additionally, predefined value sets (for example, all article types) can be defined by adding a subclass to the EnumeratedValueClass. Each subclass of the value set is represented as one element within the value set.

Altogether, Empusa shortens the development cycle and eases the development, consistency, and maintenance of GBOL and its associated framework, because all elements are generated from one single source.

Using Empusa

To use the Empusa application, the ontology (the input file) must be written in a combination of OWL and ShEx. Structural examples, created with Protégé, are given below.

Obtaining Empusa

Binary

The Empusa code generator can be obtained from here. The EmpusaCodeGen.jar can be used directly to convert an OWL/ShEx ontology into the corresponding API, ShEx, OWL, and documentation files.

Code base (Advanced)

If you are interested in the further development of Empusa you can access the code base and the various modules at:

https://gitlab.com/Empusa/Empusa

The application can be installed by running the following command from within the folder obtained:

./install.sh install

Getting started - Building your own ontology

Example ontology

An example of an ontology project can be found at:

https://gitlab.com/Empusa/ExampleOntology

To obtain it with git, run the following command:

git clone https://gitlab.com/Empusa/ExampleOntology

The cloned project contains two Turtle files:

- ExampleAdditional.ttl

- example-ontology.ttl

The example-ontology.ttl file contains the ontology. It was created with Protégé and can therefore easily be opened with Protégé.

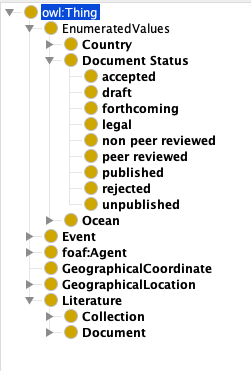

In the following image an overview of the example ontology is given.

The root of the ontology is owl:Thing, under which all other classes are defined as subclasses.

An important class for the API is EnumeratedValues. Within this class, a limited selection of values for a specific property can be defined. For example, when a class has the property country, it should be defined as:

#* The country

country type::Country;

This ensures that the predicate country can only take a value from the list of countries available in the subclass Country of EnumeratedValues, which enforces strict adherence to an accepted naming scheme.
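To illustrate what such a closed value set means for calling code, here is a minimal Java sketch. It is not the code Empusa generates: the enum name `Country` mirrors the subclass mentioned above, while the member names and the `parse` helper are hypothetical and chosen purely for illustration.

```java
import java.util.Locale;

/**
 * Hypothetical closed value set. The members are illustrative assumptions,
 * not the actual contents of the Country subclass in the example ontology.
 */
enum Country {
    NETHERLANDS, GERMANY, FRANCE;

    /** Accepts a label only if it names one of the members above. */
    static Country parse(String label) {
        // valueOf throws IllegalArgumentException for any unknown name
        return Country.valueOf(label.trim().toUpperCase(Locale.ROOT));
    }

    public static void main(String[] args) {
        System.out.println(parse("germany"));
        try {
            parse("Atlantis");
        } catch (IllegalArgumentException e) {
            System.out.println("rejected: Atlantis");
        }
    }
}
```

Any value outside the set is rejected at the boundary, which is the kind of guarantee the country property definition is meant to provide.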

Linking to other classes can be done via:

bibo:presents @bibo:Document*;

bibo:organizer @foaf:Agent*;

bibo:place xsd:String*;

Here @bibo:Document points to the Document class located under Literature in the same ontology file, and organizer points to an Agent class. The bibo: in @bibo:Document makes use of the predefined prefixes, in which bibo corresponds to http://purl.org/ontology/bibo/.
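The prefix mechanism can be sketched in Java as follows. This is only an illustration of how a prefixed reference resolves to a full IRI, not Empusa's own implementation; the class `PrefixExpander` and its methods are hypothetical, and the only namespace used is the bibo one given in the text. The sketch expects a reference without a trailing cardinality symbol.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical helper that expands prefixed names such as @bibo:Document. */
final class PrefixExpander {
    private final Map<String, String> prefixes = new HashMap<>();

    /** Registers a prefix and returns this object so calls can be chained. */
    PrefixExpander register(String prefix, String namespace) {
        prefixes.put(prefix, namespace);
        return this;
    }

    /** Expands "@prefix:Local" or "prefix:Local" into a full IRI. */
    String expand(String reference) {
        // A leading '@' marks a link to another class and is not part of the name
        String name = reference.startsWith("@") ? reference.substring(1) : reference;
        int colon = name.indexOf(':');
        if (colon < 0) {
            throw new IllegalArgumentException("Not a prefixed name: " + reference);
        }
        String namespace = prefixes.get(name.substring(0, colon));
        if (namespace == null) {
            throw new IllegalArgumentException("Unknown prefix in: " + reference);
        }
        return namespace + name.substring(colon + 1);
    }

    public static void main(String[] args) {
        PrefixExpander expander = new PrefixExpander()
                .register("bibo", "http://purl.org/ontology/bibo/");
        System.out.println(expander.expand("@bibo:Document"));
    }
}
```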

To define other types such as String, Integer, date, etc., the following notation is used:

bibo:shortTitle xsd:String?;

dc:created xsd:date?;

bibo:numPages xsd:Integer?;

To restrict the number of values a specific predicate can have, the symbols * ? + = ~ are used, where * denotes 0..N, ? denotes 0..1, and + denotes 1..N. The = and ~ signs specify that the references are stored as an ordered list, which ensures that the elements are numbered.
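As a rough illustration of the three counting symbols, the Java sketch below reads the trailing symbol of a property definition and maps it to a minimum and maximum number of values. The enum `Cardinality` and its methods are hypothetical and not part of Empusa. The sketch deliberately covers only *, ? and +; definitions without a suffix and the ordered-list symbols = and ~ are rejected, because their exact bounds are not stated here.

```java
/** Hypothetical mapping from the *, ? and + suffixes to value-count bounds. */
enum Cardinality {
    ZERO_OR_MORE('*', 0, Integer.MAX_VALUE), // 0..N
    ZERO_OR_ONE('?', 0, 1),                  // 0..1
    ONE_OR_MORE('+', 1, Integer.MAX_VALUE);  // 1..N

    final char symbol;
    final int min;
    final int max; // Integer.MAX_VALUE stands in for the unbounded "N"

    Cardinality(char symbol, int min, int max) {
        this.symbol = symbol;
        this.min = min;
        this.max = max;
    }

    /** Reads the last character before the optional closing ';'. */
    static Cardinality fromDefinition(String definition) {
        String s = definition.trim();
        if (s.endsWith(";")) {
            s = s.substring(0, s.length() - 1).trim();
        }
        if (!s.isEmpty()) {
            char last = s.charAt(s.length() - 1);
            for (Cardinality c : values()) {
                if (c.symbol == last) {
                    return c;
                }
            }
        }
        throw new IllegalArgumentException("No *, ? or + suffix in: " + definition);
    }

    /** True if the given number of values satisfies this cardinality. */
    boolean allows(int count) {
        return count >= min && count <= max;
    }

    public static void main(String[] args) {
        Cardinality c = fromDefinition("bibo:shortTitle xsd:String?;");
        System.out.println(c + " allows two values: " + c.allows(2));
    }
}
```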

For a more complex ontology written in this format, have a look at gbol-ontology.ttl in the GBOL git directory.

Generating the API

Once you have defined your ontology (or part of it), the API can be generated with EmpusaCodeGen.jar.

java -jar EmpusaCodeGen.jar

The following options are required: [-o | -output], [-i | -input]

Usage: <main class> [options]

Options:

--help

-rg, -RDF2Graph

File to write RDF2Graph file

-r, -Routput

The directory into which the R project should be generated

-sC, -ShExC

Generate ShExC file

-sR, -ShExR

Generate ShExR file

-doc

Generate a documentation page

-eb, -excludeBaseFiles

Do not overwrite the base project and pom files

Default: false

* -i, -input

The additional file followed by the ontology to use

-jsonld

Generate json framing file

* -o, -output

The directory into which the project should be generated

-owl

Generate official OWL file

-sNP, -skipNarrowingProperties

RDF2Graph export skip property already defined in parent class

Default: false

* required parameter

For example, to build the example ontology:

java -jar EmpusaCodeGen.jar -i ExampleAdditional.ttl -i example-ontology.ttl -o ./MyJavaApi -owl ./file.owl -ShExC ./file.shex

This creates a MyJavaApi folder containing all the source code files and Gradle build files. You can immediately compile the Java code into a jar package with the provided install script, which makes it easier to add as a dependency to an existing code base.

cd ./MyJavaApi && ./install.sh
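If the generation step needs to be scripted from an existing Java build, the command line shown earlier can be assembled and launched with the standard `ProcessBuilder` class. The sketch below is one possible way to do this and uses only the -i and -o options from the help output; the class `EmpusaRunner` and its methods are hypothetical, and the jar path is assumed to be relative to the working directory.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical wrapper that builds and runs the EmpusaCodeGen.jar command. */
final class EmpusaRunner {

    /** Builds: java -jar <jar> -i <input> ... -o <outputDir> */
    static List<String> buildCommand(String jarPath, List<String> inputs, String outputDir) {
        List<String> command = new ArrayList<>();
        command.add("java");
        command.add("-jar");
        command.add(jarPath);
        for (String input : inputs) {
            // Each input file gets its own -i, as in the example command
            command.add("-i");
            command.add(input);
        }
        command.add("-o");
        command.add(outputDir);
        return command;
    }

    /** Runs the command, forwarding its output, and returns the exit code. */
    static int run(List<String> command) throws IOException, InterruptedException {
        Process process = new ProcessBuilder(command).inheritIO().start();
        return process.waitFor();
    }

    public static void main(String[] args) throws Exception {
        List<String> command = buildCommand(
                "EmpusaCodeGen.jar",
                Arrays.asList("ExampleAdditional.ttl", "example-ontology.ttl"),
                "./MyJavaApi");
        System.out.println(String.join(" ", command));
        // Call run(command) here once EmpusaCodeGen.jar is present in this directory.
    }
}
```

Keeping command construction separate from execution makes the argument order easy to check without having the jar available.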

Using the API is explained in the Usage section.